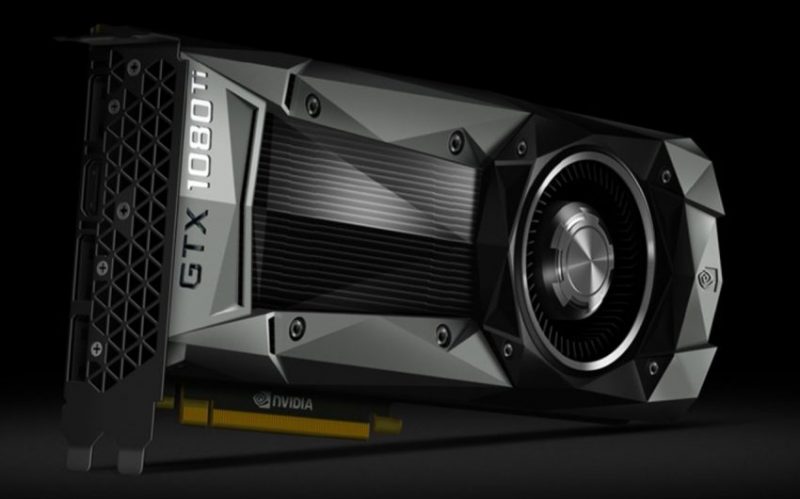

If you have ever built an editing or gaming PC, chances are you have used Nvidia cards at some stage. They may not be the cheapest, but the company has always been at the bleeding edge of what is possible in the world of computer graphics cards. And their latest creation is no different – the new NVIDIA GTX 1080 Ti offers 35 percent faster performance compared to the company’s previous GeForce GTX 1080, and is even faster than the Nvidia TITAN X.

The new Nvidia GTX 1080 Ti pushes the 4K editing and 4K gaming boundaries even further by being built on the industry’s cutting-edge FinFET process. Its 12 billion transistors deliver a dramatic increase in performance and efficiency, and it bristles with 3,584 NVIDIA CUDA cores and a massive 11 GB frame buffer running at an unheard-of 11 Gbps.

NVIDIA GeForce GTX 1080 Ti Highlights

- GPU Architecture: Pascal

- Frame buffer: 11 GB GDDR5X

- Memory Speed: 11 Gbps

- Boost Clock Actual: 1582 MHz

With its whopping 11 GB of VRAM, it should make mincemeat of most, if not all, 4K footage, including Raw. Of course, this depends on the overall performance of the machine and its other components, which is beyond the scope of this article, but you get the idea – the new GTX 1080 Ti is a seriously fast graphics card.

Of course, the announcement of the new GTX 1080 Ti powerhouse may bring tears to the eyes of recent GTX 1080 or (worse) Titan X owners, since early reviews and benchmarks suggest the new card “smokes” both when it comes to “oomph”.

You can see some here and here.

Of course, it’s not all doom and gloom for GTX 1080 and Titan X owners. Just because there is a new, faster and (in the case of the Titan X) cheaper card out there doesn’t mean the existing NVIDIA graphics cards will perform any worse than they do now.

I see the same happening in the Pro camera game – the new URSA Mini Pro was announced recently out of nowhere, much to the dismay of some, but not all, original URSA 4.6K Mini owners. With technology it’s always a give and take – there will always be the “next big thing”, which will be faster and cheaper until it gets outperformed by the next, next product. Chasing the latest and greatest is rarely a win-win situation.

The new GeForce GTX 1080 Ti has been designed to handle the highest graphical demands of 360 VR experiences, and is also 4K VR-ready.

Heat dissipation and power consumption are huge concerns when it comes to super-fast GPUs, of course, and the new Nvidia GTX 1080 Ti runs as cool as it looks. That’s thanks to superior heat dissipation from a new high-airflow thermal solution with vapour chamber cooling, 2x the airflow area and a power architecture featuring a seven-phase power design with 14 high-efficiency dualFETs.

NVIDIA GeForce GTX 1080 Ti Full Specs:

GPU Engine Specs:

- NVIDIA CUDA Cores: 3584

- Base Clock (MHz): 1480

- Boost Clock (MHz): 1582
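To put the core count and clock speeds listed above in perspective, here is a rough sketch of the theoretical single-precision throughput they imply. It assumes each CUDA core completes one fused multiply-add per clock, counted as 2 floating-point operations; that factor and the function name are assumptions for illustration, and the result is a paper ceiling rather than a benchmark.

```python
def peak_fp32_tflops(cuda_cores: int, clock_mhz: float,
                     flops_per_core_per_clock: int = 2) -> float:
    """Theoretical peak FP32 throughput in TFLOPS.

    Assumes one fused multiply-add (2 FLOPs) per core per clock,
    which is an assumption of this sketch, not a measured figure.
    """
    flops = cuda_cores * clock_mhz * 1e6 * flops_per_core_per_clock
    return flops / 1e12


# Spec values from the list above: 3584 cores, 1480 MHz base, 1582 MHz boost.
base = peak_fp32_tflops(3584, 1480)
boost = peak_fp32_tflops(3584, 1582)
print(f"{base:.1f} to {boost:.1f} TFLOPS")  # 10.6 to 11.3 TFLOPS
```

Real-world performance will depend on the workload, drivers and how long the card can sustain its boost clock.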

Memory Spec:

- Memory Speed: 11 Gbps

- Standard Memory Config: 11 GB GDDR5X

- Memory Interface Width: 352-Bit

- Memory Bandwidth (GB/sec): 484
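The bandwidth figure is not an independent spec; it follows from the memory speed and the interface width. A minimal sketch of that arithmetic (the function name is purely illustrative): multiply the per-pin data rate by the bus width, then divide by 8 to convert bits to bytes.

```python
def memory_bandwidth_gb_per_s(data_rate_gbps: float, bus_width_bits: int) -> float:
    """Peak memory bandwidth in GB/s from per-pin data rate and bus width."""
    # Gbit/s per pin * number of pins = total Gbit/s; divide by 8 for GB/s.
    return data_rate_gbps * bus_width_bits / 8


# Spec values from the list above: 11 Gbps memory on a 352-bit interface.
print(memory_bandwidth_gb_per_s(11, 352))  # 484.0
```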

Technology Support:

- Simultaneous Multi-Projection – Yes

- VR Ready – Yes

- NVIDIA Ansel – Yes

- NVIDIA SLI® Ready – Yes (SLI HB Bridge supported)

- NVIDIA G-Sync-Ready – Yes

- NVIDIA GameStream™-Ready – Yes

- NVIDIA GPU Boost™ 3.0

- Microsoft DirectX 12 API with feature level 12_1

- Vulkan API – Yes

- OpenGL 4.5

- Bus Support – PCIe 3.0

- OS Certificates – Windows 7-10, Linux, FreeBSD x86

Display Support:

- Maximum Digital Resolution: 7680 x 4320@60Hz

- Standard Display Connectors: DP 1.4, HDMI 2.0b

- Multi Monitor: Yes

- HDCP: 2.2

Graphics Card Dimensions:

- Height 4.376″

- Length 10.5″

- Width 2 Slot

Thermal and Power Specs:

- Maximum GPU Temperature (in C): 91

- Graphics Card Power (W): 250 W

- Recommended System Power (W): 600 W

- Supplementary Power Connectors: 6-pin, 8-pin
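If you are planning a build around this card, it can help to check that the listed connectors cover the 250 W card power. The sketch below is a rough budget, assuming the nominal PCI Express ratings of 75 W from the slot, 75 W from a 6-pin connector and 150 W from an 8-pin connector. Those ratings come from the PCIe specification rather than from NVIDIA's spec sheet, and the names used are hypothetical.

```python
# Nominal PCI Express power ratings in watts (assumed, not from the spec sheet above).
CONNECTOR_WATTS = {"slot": 75, "6-pin": 75, "8-pin": 150}


def available_power_w(connectors: list[str]) -> int:
    """Total nominal power from the PCIe slot plus supplementary connectors."""
    return CONNECTOR_WATTS["slot"] + sum(CONNECTOR_WATTS[c] for c in connectors)


card_power_w = 250  # Graphics Card Power from the list above
budget = available_power_w(["6-pin", "8-pin"])
headroom = budget - card_power_w
print(budget, headroom)  # 300 50
```

The 600 W system recommendation is a separate figure that also has to cover the CPU, drives and everything else in the machine.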

So, in summary – if you are looking to start building that mega 4K editing beast of a PC or Hackintosh, you may want to consider the new Nvidia GTX 1080 Ti.

There will also be an NVIDIA Founders Edition, which, along with the regular version, will be available worldwide on March 10 from NVIDIA GeForce partners, including ASUS, Colorful, EVGA, Gainward, Galaxy, Gigabyte, Innovision 3D, MSI, Palit, PNY and Zotac.

Pricing is set at $699 in the US and £699 in the UK. To pre-order, head over to NVIDIA.



To clarify – none of the current Pascal cards are supported by Hackintosh and the Clover config, so you’re stuck with the past-gen 980 Ti.